Hi, I’m Margaret Simmons, and I’m a graphic designer, artist, and brand consultant.

I’ve been in my field for over 10 years. I’ve held management positions at large IT companies, but I found my calling in freelancing.

I am happy to share useful information about graphic design with beginners and advanced designers alike.

Illustrations, interfaces, games, interiors – a designer can work in many different areas. Let’s explore the subtleties of modern design professions and take a look at the design of the future. I will tell you about the most popular design professions, what digital artists do, and what you need to know to create useful content.

Graphic Designer


A graphic designer is responsible for visualizing information. They develop corporate identities, create advertising banners and packaging layouts, and may also lay out magazines and catalogs. The work involves a lot of creativity, but also a fair amount of routine: you will have to carefully cut out objects, clean up visual clutter in images, and choose colors.

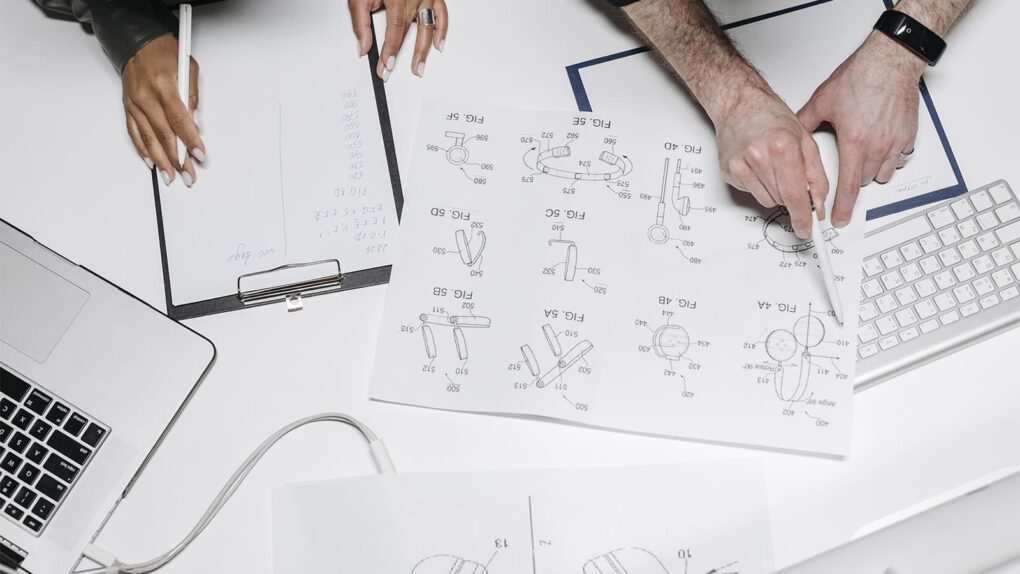

3D Designer

A 3D specialist develops three-dimensional objects: game characters, interior and landscape design elements, and so on. With 3D graphics, the elements of a virtual space gain depth and look more realistic. Beyond graphics editors, a 3D designer needs to master the entire 3D graphics development cycle.

Digital Designer

Digital design is a new-generation specialty that combines the fundamentals of graphic design with information technology. A digital designer creates designs for websites, mobile applications, and digital advertising products.

Designer of the Future

Some design professions would have seemed like science fiction a decade ago, but today they are promising and exciting fields for bringing bold ideas to life. Here is what to study if you want to learn how to design models of the human body and its organs or create the virtual worlds of the future.